DeepSeek V4 on NVIDIA Blackwell: A Practical Deployment Guide

DeepSeek V4 changes the conversation from “which model should we try?” to “how do we run long-context AI reliably, securely, and at a predictable token cost?” NVIDIA’s article highlights the raw ingredients: DeepSeek-V4-Pro and DeepSeek-V4-Flash, one-million-token context, hybrid attention, GPU-accelerated endpoints, and Blackwell-class serving performance. This guide turns those ingredients into an implementation plan.

Source note: this guide is based on NVIDIA’s DeepSeek V4 Blackwell article, DeepSeek’s V4 preview notes, and the public Hugging Face model cards. It is rewritten as an implementation playbook for European SMEs and internal platform teams.

Infographic: Infographic

Image URL: https://yltwkehkxfjvzzdmbdja.storage.supabase.co/storage/v1/object/public/cms/media/deepseek-v4-model-chooser.png

What matters in the DeepSeek V4 release

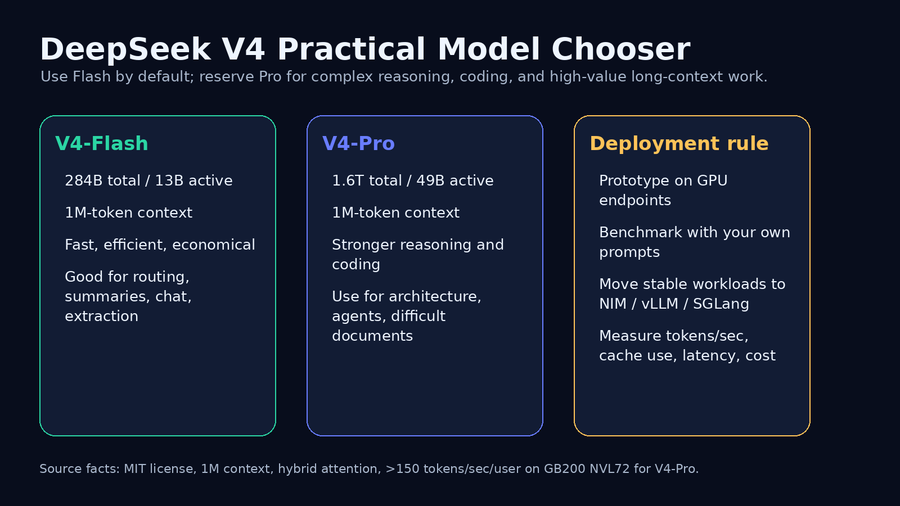

The headline is not only model size. It is the combination of open weights, long context, and inference efficiency. DeepSeek-V4-Pro is the flagship model with 1.6T total parameters and 49B active parameters. DeepSeek-V4-Flash is the smaller efficiency model with 284B total parameters and 13B active parameters. Both support up to a 1M-token context window and are released under the MIT license.

NVIDIA reports that DeepSeek V4’s hybrid attention approach is designed to reduce per-token inference FLOPs by 73% and KV cache memory burden by 90% compared with DeepSeek-V3.2. NVIDIA also reports out-of-the-box DeepSeek-V4-Pro performance of more than 150 tokens per second per user on GB200 NVL72. For production teams, those numbers are a signal: long-context workloads are becoming operationally realistic, but they still need disciplined architecture.

Step 1: Choose Flash first, Pro deliberately

Start with DeepSeek-V4-Flash for high-volume tasks: summarization, routing, classification, extraction, chat, first-pass code review, and document triage. Escalate to DeepSeek-V4-Pro when the task is expensive to get wrong: multi-file coding, architecture decisions, complex reasoning, legal or technical synthesis, and agent planning.

• Default route: Flash for speed and cost.

• Escalation route: Pro for reasoning depth, difficult coding, and high-value decisions.

• Never decide from public benchmarks alone. Use a small internal golden set with your own documents, tickets, code, and policies.

Step 2: Prototype on endpoints before owning the stack

NVIDIA GPU-accelerated endpoints are the fastest way to validate prompts, context length, latency, and model routing without committing to a serving stack. Treat the endpoint phase as a measurement phase, not a demo phase. Capture p50 and p95 latency, tokens per second, prompt size, output size, error modes, and cost per completed workflow.

A useful first sprint is small: 20 representative tasks, three context sizes, two models, and one clear success metric per task. This prevents the common mistake of testing one impressive prompt and assuming the system is production-ready.

Infographic: Infographic

Image URL: https://yltwkehkxfjvzzdmbdja.storage.supabase.co/storage/v1/object/public/cms/media/deepseek-v4-reference-architecture.png

Step 3: Design for one-million-token context without abusing it

A 1M-token context window is powerful, but dumping everything into the prompt is still a bad architecture. Long context should be reserved for cases where continuity matters: repository-wide coding, contract packs, technical documentation, multi-month customer history, incident timelines, or agent memory. For routine tasks, retrieval, summaries, and cacheable context are still better.

• Separate stable context from volatile context. Stable context can be cached or reused; volatile context should stay small and explicit.

• Prefer structured sections: goal, constraints, source documents, allowed tools, expected output, and evaluation criteria.

• Log context length distribution. The average request may be cheap while the worst 5% dominate cost and latency.

Step 4: Pick the serving path: NIM, vLLM, or SGLang

After the endpoint pilot, choose the production path based on operational requirements. NVIDIA NIM is attractive when teams want packaged, enterprise-oriented deployment. vLLM is strong for high-throughput serving and broad ecosystem support. SGLang is useful for structured generation, agent workflows, and serving patterns where programmatic control matters. The right choice is the one your team can monitor, upgrade, and secure.

For WerkHub-style on-premise deployments, the decision is also about data boundaries. If documents, code, designs, or customer records cannot leave the company network, the production target should be an internal GPU server or appliance with strict identity, logging, and backup policies.

Step 5: Measure the bottleneck that actually matters

Long-context inference has several bottlenecks: prefill time, decode speed, KV cache memory, concurrency, retrieval quality, and human review time. Do not optimize only for raw tokens per second. A slower but more accurate model can be cheaper if it avoids retries. A faster model can be better if a router escalates only hard cases to Pro.

Infographic: Infographic

Image URL: https://yltwkehkxfjvzzdmbdja.storage.supabase.co/storage/v1/object/public/cms/media/deepseek-v4-deployment-checklist.png

Step 6: Add governance before agents get autonomy

DeepSeek V4 is positioned for agentic workflows, but agents amplify both value and risk. Before connecting a long-context model to tools, define what the agent may read, what it may write, which actions require approval, and where the audit trail lives. In European SME environments this is not bureaucracy; it is the difference between a useful assistant and uncontrolled shadow automation.

• Read permissions should follow existing company roles.

• Write actions should start behind human approval.

• Every production workflow needs prompt, source, output, user, timestamp, and tool-action logs.

A practical 30-day rollout plan

Week 1: collect representative tasks, define data classes, and create a golden evaluation set. Week 2: test Flash and Pro through GPU-accelerated endpoints and measure latency, quality, and cost. Week 3: choose one production path and run a controlled internal pilot. Week 4: add monitoring, access control, fallbacks, and user guidance before expanding to more teams.

The main lesson from NVIDIA’s DeepSeek V4 article is practical: frontier open models are no longer just research artifacts. With efficient attention, Blackwell-class hardware, and production serving frameworks, the hard part shifts to infrastructure strategy, data governance, and disciplined rollout.